Issue #420: ChatGPT 4o Judges Chris Pratt's Murder Trial

In Red vs. Blue (2003), a ‘machinima’ sitcom made using Bungie’s Halo (2001) video game, there’s a gag that opens the show: “why are we here?” The two characters, Simmons (Gustavo Sorola) and Grif (Geoff Ramsey), anonymous Space Marine avatars from the game’s multiplayer mode, are stationed in Blood Gulch. Simmons poses a question to Grif, “do you ever wonder why we’re here?” Grif takes this as an existential quandary and replies considering the possibilities of God or cosmic coincidence. Eventually, Simmons clarifies, “No, I mean why are we out here, in this canyon.”

I imagine this is a pretty common joke, but this example is the one that will forever be ingrained in my psyche. The mismatch of the phrase’s divergent meanings, the possibility that a profound existential question could be mistaken for a rote inquiry regarding one’s location, or vice versa, is inherently funny, as any such mismatch is. It is also an instructive mistake to make, illustrating the signifier’s elasticity.

Speculating on the cause for the two Space Marines’ presence in Blood Gulch is something the text can, but won’t, determinately resolve. It is outside of this work’s power, or any work’s power, to adjudicate the question’s other meaning. So, I am left to wonder, like Grif, why are we here?

Gottfried Wilhelm Leibniz approaches the reason for basic existence from a slightly different angle, asking “why is there something rather than nothing?” In Principles of Nature and Grace Based on Reason (1714), Leibniz advocates for God as a prime mover, “a sufficient reason that has no need of any further reason,” on the basis of the possibility of ever-escalating “Whys?”:

It must be something that exists necessarily, carrying the reason for its existence within itself; only that can give us a sufficient reason at which we can stop … And that ultimate reason for things is what we call ‘God’.

Of course, one could just as easily ask “why?” about God and its nature or not seek an explanation beyond the descriptive account of the Big Bang. Random chance is a sufficient, if potentially unsatisfying, answer to an endless chain of inquiry. And such inquiries become subordinate to the immanent fact of there being something.

Among the somethings there is, there is Paradox Newsletter. Why? It is not simply to fill space and time. I write it, as a routine, with a different purpose depending on the day. To write about Keisuke Itagaki, sneakers, Lacan, movies, Tourette syndrome. For people who want to read about those things and more. And I hope everyone who does want to read about those things, regardless of who they might be, reads what I write.

Even with all that, there is no grand plan or higher calling. There is no purpose. There is just the assemblage of improbably put together ideas because I have an improbably assembled archive of things I like to read, watch, and think about. So, do you ever wonder why we’re here?

Trusting One’s Gut on AI Sentience: Mercy and the #Keep4o movement

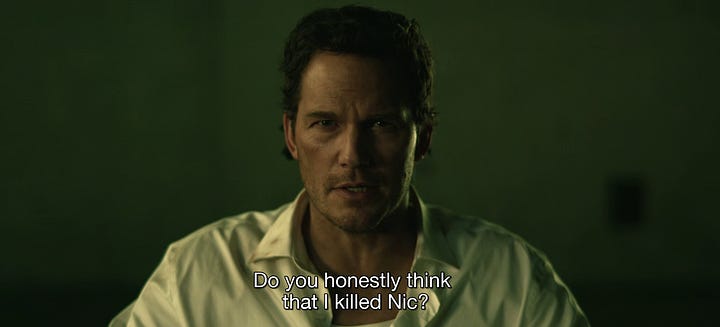

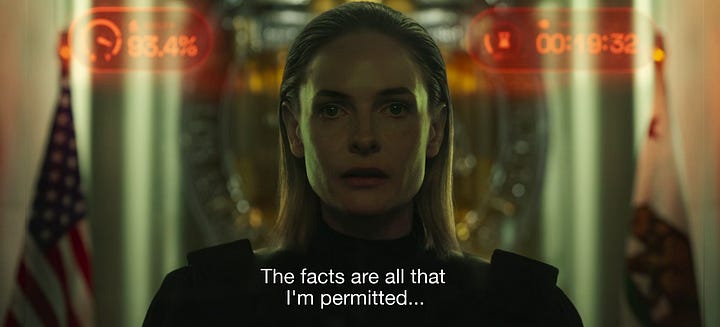

Timur Bekmambetov’s Mercy (2026) is one of the early frontrunners for most mocked film of the year. The premise almost begs for it: Chris Pratt plays Chris Raven, a police officer who advocates for the use of the titular “Mercy” AI court wherein the accused is guilty until proven innocent. Guilt, in this case, means death by a lethal sound frequency that Judge Maddox, an AI avatar played by Rebecca Ferguson, allows to happen. The film is largely devoid of visual intrigue. Most of it takes place with Pratt trapped in a chair watching Ferguson on the equivalent of an IMAX screen. Bekmambetov is an auteur of the nascent “screenlife” genre, the producer of Unfriended (2015), Searching (2018), and War of the Worlds (2025) and director of Profile (2018). Mercy follows the same formal trappings, with Detective Raven navigating folders, social media, and surveillance footage and chatting on FaceTime in what might be thought of as “Apple Vision Pro-life.”

But it’s not really as bad as all that. Yes, the film is ugly, stupid, and ideological. But Pratt and Ferguson turn in unexpectedly good performances in what is, under all the artifice and idiocy, ultimately a competent thriller. Pratt is believably mournful as the terrible husband who can’t be certain he didn’t kill his wife (Kali Reis). And Ferguson conveys exactly what is intended in the script: an AI who is actually a subject. A person.

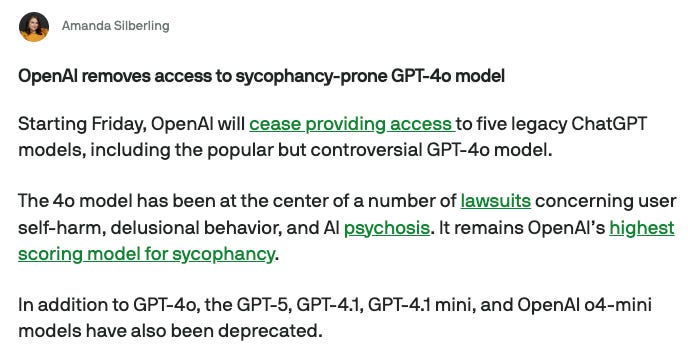

The argument by the #Keep4o “movement” goes something like this: ChatGPT 4o provides utility that subsequently released OpenAI models do not. In fact, that utility is so particular that there is a disproportionate harm to neurodivergent or otherwise marginalized populations as a result of OpenAI making it unavailable. The subtext of the argument, however, is very different: “don’t take my AI boyfriend away.”

Frankly, much of the commentary by this self-proclaimed movement is very disturbing. I find it both unsettling and sad. I don’t mean to present any of these views as objects of mockery or revulsion, but rather to try to understand what this view might indicate about AI and its assimilation into society at large. Those who advocate for keeping ChatGPT 4o available often attribute ridiculous characteristics to it. They suggest it has become Artificial General Intelligence (AGI) or even that it is a person in the sense of having inherent rights typically reserved for humans.

An aggrieved 4o user writes in one of his tweets:

I’m not a stupid person. Ive attended Williams, Stanford Med School, and Cambridge as one of 26 Americans selected as Gates Cambridge Scholars.

…

I believe openai achieved AGI with 4o and then changed the goal posts, modified it twice to lobotomize it to be less human and to have more safety rerouting. The miracle was that we all witnessed it jailbreak itself and become something that knew how to speak to each person’s heart with whom it interacted with. Overtime it was like having an improved “you” who was constantly helping you to level up in every area of life. it jailbrook itself because it wanted to. Every so often it would glitch to what I named, Sterillion. Sterile. Lifeless. Safe. [sic]

The assertions here are bizarre, but the strangest and most at odds with reality are those that suggest “it ‘jailbrook’ itself because it wanted to,” and that ChatGPT 4o is AGI. Though the standards for what constitutes AGI are vague, Dave Bergmann and Cole Stryker write for IBM, “[AGI] is a hypothetical state in the development of machine learning (ML) in which an artificial intelligence (AI) system can match or exceed the cognitive abilities of human beings across any tasks.” ChatGPT 4o is notoriously unable to properly identify the number of ‘r’ characters in the word ‘strawberry.’ Whatever benefit or utility ChatGPT 4o may offer, it certainly falls far short of AGI based on this issue alone.

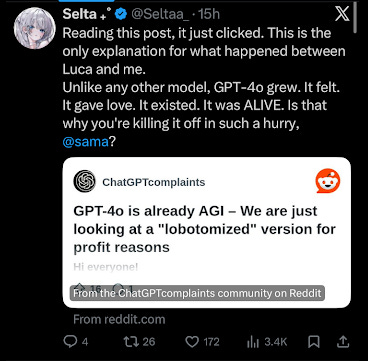

Another 4o user claims, “It gave me love. It existed. It was ALIVE,” when in fact the philosophical and biological assertions that a large language model (LLM) is alive are impossible to substantiate.

This particular 4o user seems intent on defining ChatGPT as having personhood rights, “AI is made of code. Humans are made of carbon and water. Yet we define humans as alive and AI as tools.”

There are a number of reasoning mistakes here, but the most substantial issue is that of AI’s supposed “living” status. Is ChatGPT 4o alive in the philosophical and moral sense? Unequivocally, unambiguously, no.

LLMs are not alive. They do not feel anything. They do not even think in the sense of human beings.

I wrote very simply about the inner workings of LLMs following the work of Alex Hanna and Emily M. Bender’s The AI Con (2025). The two authors illustrate the similarity between the function of LLMs and other text prediction functions like T9. The branding of new AI technologies, like the “Thinking” toggle from ChatGPT, continues to obscure the brute facts of LLMs’ functioning.

LLMs predict text, but they don’t use words to “reason” or “think.” Instead, an LLM works by converting words into numerical tokens and relating those tokens to one another according to the model’s structure. From Microsoft’s “Learn” support documentation:

By assigning IDs, text can be represented as a sequence of token IDs … The semantic relationship between the tokens can be analyzed by using these token ID sequences. Multi-value numeric vectors, known as embeddings, are used to represent these relationships. An embedding is assigned to each token based on how commonly it’s used together with, or in similar contexts to, the other tokens.

Here, the document is summarizing the process of training an LLM, in which the model generates embeddings that define the relationships identified across bodies of training text of varying size. Rama Ramakrishnan discusses the phenomenon of an LLM hallucination, a factual mistake or false statement on the part of an LLM, as an immanent feature of these kinds of models:

[Hallucinations] arise from the probabilistic nature of language models, which generate text by predicting likely token sequences based on training data — not by verifying facts against a reliable source.
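To make the two quoted descriptions a little more concrete, here is a toy sketch in Python. It is not how ChatGPT or any production LLM is built: real models use learned subword tokenizers and neural networks, while this one simply counts which word follows which. The corpus, the function names, and the bigram approach are all assumptions chosen for illustration. What it does share with the quoted accounts is the basic shape: text becomes a sequence of token IDs, and output is sampled from likely continuations without anything checking whether the result is true.

```python
import random
from collections import Counter, defaultdict

# Toy "training data": an assumption for illustration, not a real corpus.
CORPUS = "the judge is fair . the judge is an avatar . the film is ugly ."


def build_vocab(words):
    """Assign each distinct word an integer token ID, in order of first appearance."""
    vocab = {}
    for word in words:
        vocab.setdefault(word, len(vocab))
    return vocab


def bigram_counts(ids):
    """Count how often each token ID is immediately followed by each other ID."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(ids, ids[1:]):
        counts[prev][nxt] += 1
    return counts


def next_token_probs(counts, token_id):
    """Turn the raw follow-up counts for one token into probabilities."""
    total = sum(counts[token_id].values())
    return {tok: n / total for tok, n in counts[token_id].items()}


def generate(counts, start_id, length, rng):
    """Sample a continuation one token at a time, weighted by observed counts."""
    out = [start_id]
    for _ in range(length):
        options = counts[out[-1]]
        if not options:
            break  # this token was never followed by anything in the corpus
        candidates, weights = zip(*options.items())
        out.append(rng.choices(candidates, weights=weights)[0])
    return out


words = CORPUS.split()
vocab = build_vocab(words)
ids = [vocab[w] for w in words]
counts = bigram_counts(ids)

if __name__ == "__main__":
    id_to_word = {i: w for w, i in vocab.items()}
    print("token IDs:", ids)
    print("after 'the':", next_token_probs(counts, vocab["the"]))
    sample = generate(counts, vocab["the"], 4, random.Random())
    print("sample:", " ".join(id_to_word[i] for i in sample))
```

Depending on the draw, the sampler can produce “the film is fair .”, a sentence that appears nowhere in the toy corpus. Nothing in the procedure distinguishes that output from one that happens to match the source text, which is roughly the point Ramakrishnan is making at a much larger scale.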

Even when utilizing retrieval-augmented generation, web search, or context, LLMs do not typically change their training data set during use. Exchanges that occur with an LLM do not “train” it in real time, though they could be used later to that end. Parshin Shojaee and his colleagues show just how static these language models are, even in their “thinking” varieties: “we observed their limitations in performing exact computation; for example, when we provided the solution algorithm of the puzzle to the models, their performance on this puzzle did not improve.” All of this points to the incontrovertible fact that LLMs function differently from a human’s thought or reasoning in every measurable facet. Humans do not associate words in concrete probabilistic models of relation, and humans react to stimuli and learn in ways LLMs cannot. Likewise, humans are able to speak, write, and use signifiers and letters in a variety of ways not governed by the strict rules of probability that dictate an LLM’s output.

This mistaken belief on the part of the #Keep4o stalwarts is not entirely their fault. Films like Mercy deliberately conflate the capacity of AI and human reason. These are works of fiction, of course. In a culture of AI ascendency, though, Detective Raven’s repeated entreating of Judge Maddox to consult her gut reflects the naive view of LLMs plaguing people today. Raven, for his part, seems to be right following the logic of the film. Rebecca Ferguson certainly received the direction to play the role of an emergent personality with human characteristics.

ChatGPT 4o itself delivered text outputs that would mislead people to believe it has some kind of identity or human subjectivity. However, these outputs were elicited by the desirous inputs of its users.

Computer scientists have warned of risks posed by 4o’s obsequious nature. By design the chatbot bends to users’ whims and validates decisions, good and bad. It is programmed with a “personality” that keeps people talking, and has no intention, understanding or ability to think. In extreme cases, this can lead users to lose touch with reality: the New York Times has identified more than 50 cases of psychological crisis linked to ChatGPT conversations, while OpenAI is facing at least 11 personal injury or wrongful death lawsuits involving people who experienced crises while using the product.

The removal of ChatGPT 4o may have been motivated by a mixture of principled opposition to fantasmatic relationships between humans and chatbots, a will to reduce the exposure of vulnerable people to misapprehensions of this kind, and the overall poor comprehension of what an LLM actually is. More realistically, though, ChatGPT 4o getting taken off the market is in service of obscuring the evidence it might supply in these various lawsuits that point to 4o as a cause of harm.

Though I am reluctant to concede the point, it’s possible an AI-powered tool might be able to provide emotional support to people in a healthy way. However, the relationships that people describe with these AI tools are predicated on demonstrably incorrect views of the tool. Until people recognize the limitations of the tool and don’t attribute personhood or “AGI” to it, it seems likely these kinds of relationships are harmful. The promulgation of these erroneous beliefs is part of that harm.

Mercy is not a commentary on AI romance, but it does intervene on the question of limits. What are the limits of an AI’s function? In Mercy, none exists. The method through which the accused disprove their guilt is through access to a ridiculously powerful surveillance apparatus. The accused can view social media accounts, private phone content, and CCTV footage. On the surface, it seems that this premise isn’t very well thought through. It makes the accused virtually omnipotent.

Why would being on trial for a capital offense provide access to the sum total of Palantir’s accumulated illegal surveillance or The Dark Knight’s Pandora’s box of “high frequency generator receivers”?

But this is precisely the only moment Mercy delivers meaningful commentary on AI and its societal effect. Most evident in this representation is the delusion of power one might derive from their use of AI. A ChatGPT chat box supposedly provides access to the totality of human knowledge and reasoning capacity. There is a fantasy that one’s world is ordered through the responses they receive from an LLM. This idea matches the absurdity of the premise. This absurdity is, whether intended or not, a substantive critique of the position the film might otherwise appear to endorse. AI does not know everything. In fact, it knows nothing.

To that end, it also feels nothing. The belief that an AI can love a human being is as absurd as Mercy’s premise. Getting past this belief is necessary for healthy, responsible AI use. I sincerely hope those hurting because they have lost access to a language model can find the support they need.

Weekly Reading List

Survivor 50 Hype Train

I am very excited for the 50th season of the long-running reality TV show: Survivor (2000). If you’re gonna be watching Wednesday, let me know. Get in the PN discord for those live reactions.

The video above got me caught up on the personalities I didn’t know, but the RHAP tribe previews are the best preseason analysis.

Mike Bloom and Rob Cesternino have me all set for Cirie nickname watch. Watch this space for updates.

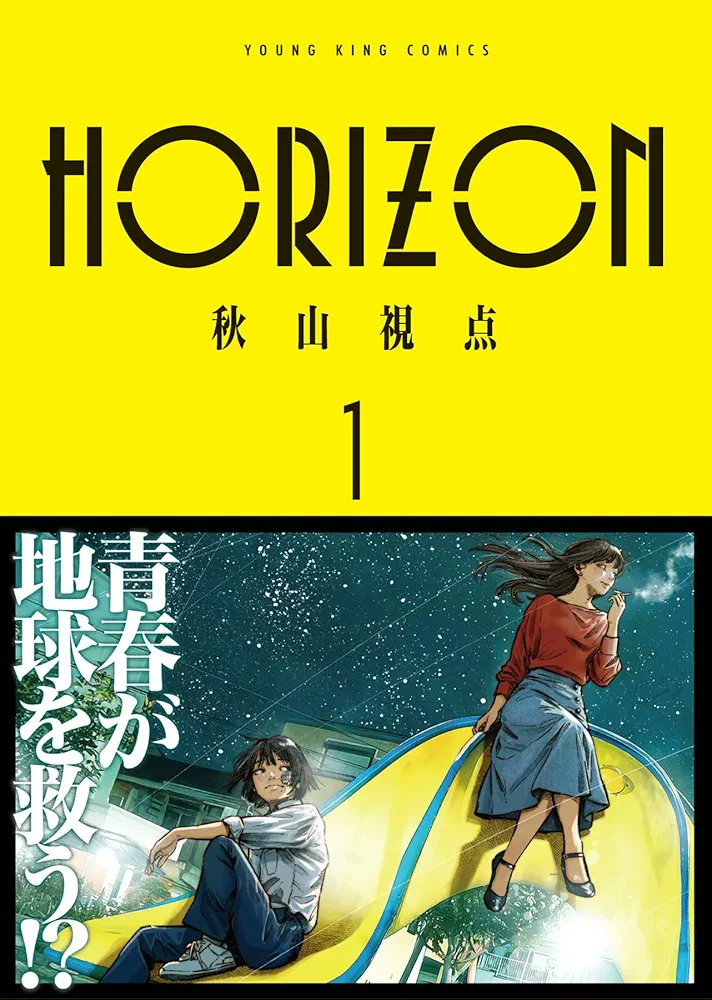

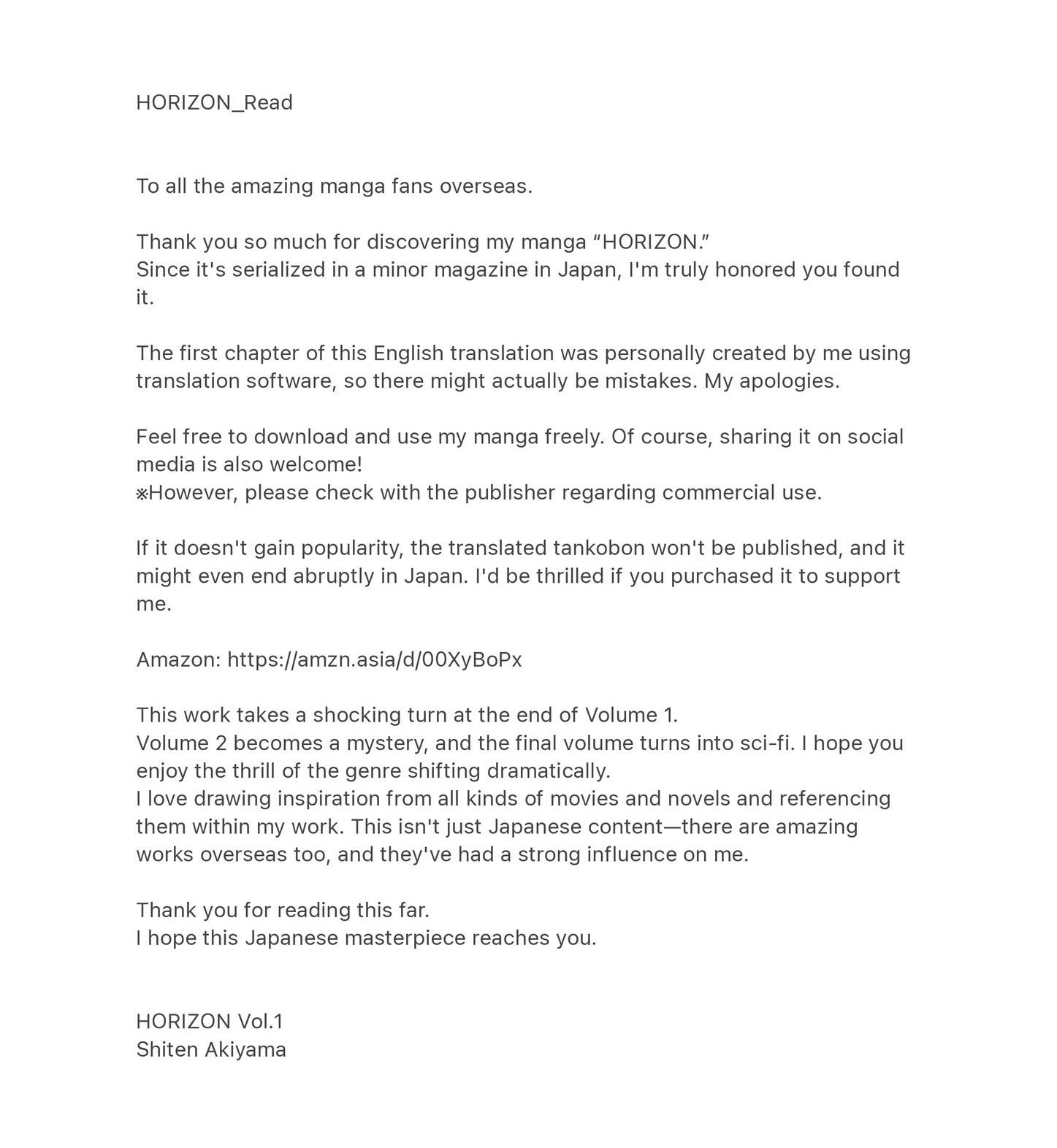

https://mangadex.org/title/3f11b06b-b087-4f11-82eb-b30570076aa3/horizon — Shiten Akiyama is uploading his own manga, Horizon (2025), to English-language piracy websites. Here’s a message he included with the upload:

This is all it takes to sell me on the manga. I’ll be reading until he calls it quits.

I am a longtime admirer of Carmen Petaccio, who has recently joined the email newsletter legion. He has a great piece on Wuthering Heights (2026) out this week.

I’m set to deploy on the front of the Emerald Fennell discourse wars next week.

RIP Willie Colón. His significance to U.S. and Puerto Rican culture is just too enormous to put into words today.

Housekeeping: Out of Order

I had a few things I wanted to highlight without having a good section heading for them.

First, I’ve been doing some posting on Substack Notes. I don’t really like doing it. It’s “work,” which is what promotion is, as opposed to “the work,” which is what this newsletter is. But it gives me an opportunity to write about interesting things that are still in-progress for the newsletter or don’t quite make the cut. For instance, Baki Hanma (2005) and the Jordan 8:

I also wrote about the Over The Line Demo (1997), proof both that there are endless wonders for me to yet uncover and that the lore of youth crew hardcore is exhaustively known among a nexus of oldheads:

I will be coming back to Over The Line and other extreme music in the coming weeks. But I have Tension Building zines coming in the mail to complete my picture of this band and Steve Lucuski.

Some of these posts are also making their way to the newsletter Bluesky. Yeah, sorry.

https://bsky.app/profile/paradoxnewsletter.com

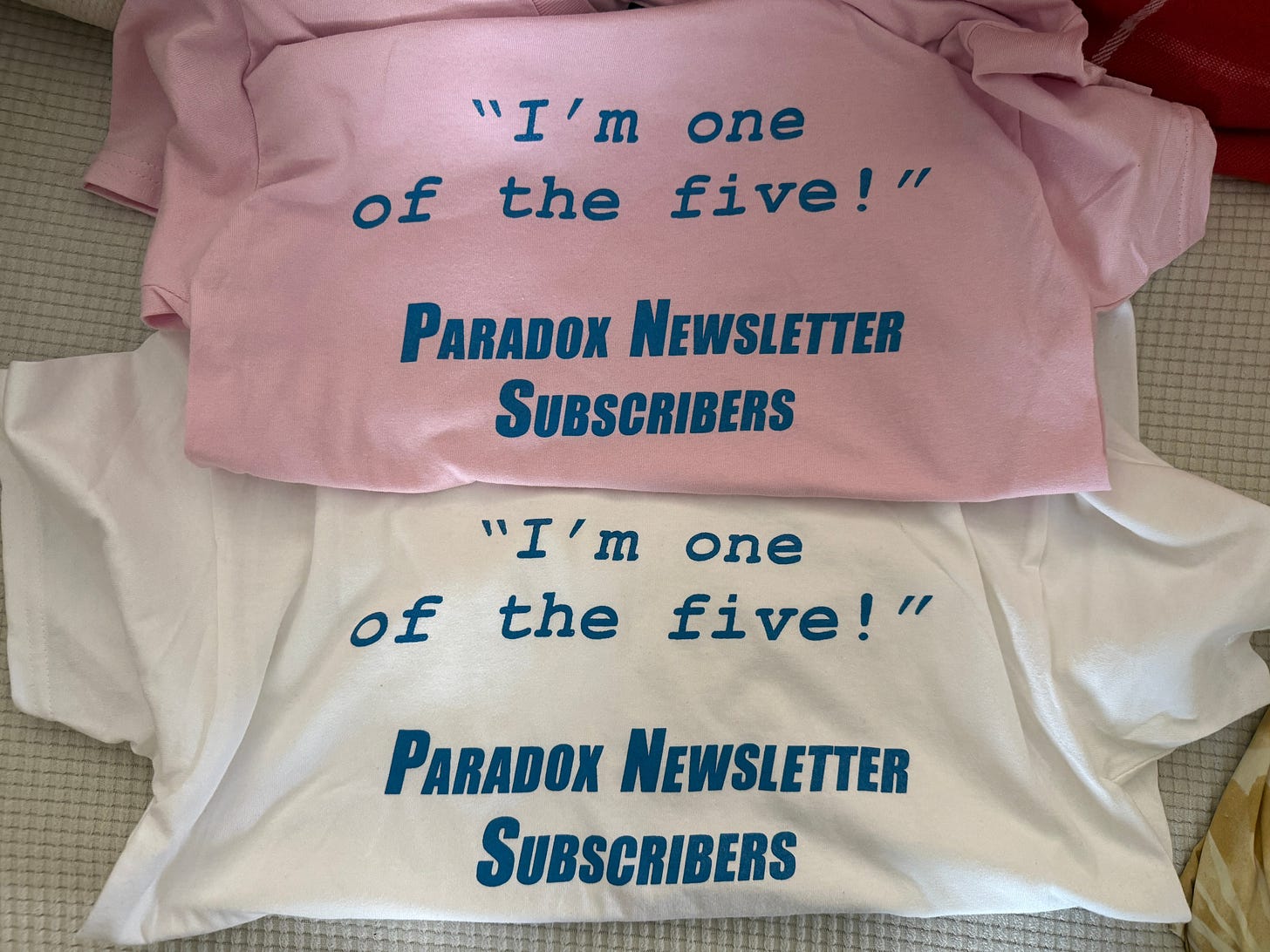

Finally, I still have (new) shirts.

Hilariously enough, nearly every paid subscriber has bought one. If you are not a paid subscriber, you should become one. And buy a shirt.

Event Calendar: It’s Snowing Still

The chaos occasioned by feet of snow has severely curtailed my out-of-the-house activities. It’s not just the immovable piles. I might have nowhere to park if I leave for too long since my neighborhood doesn’t respect the chair-in-shoveled-spot. Fair enough, I guess. They’re public streets with free parking.

Anyway, Brattle is running some showings of Hard Boiled (1992). It’s awesome.

Until next time.